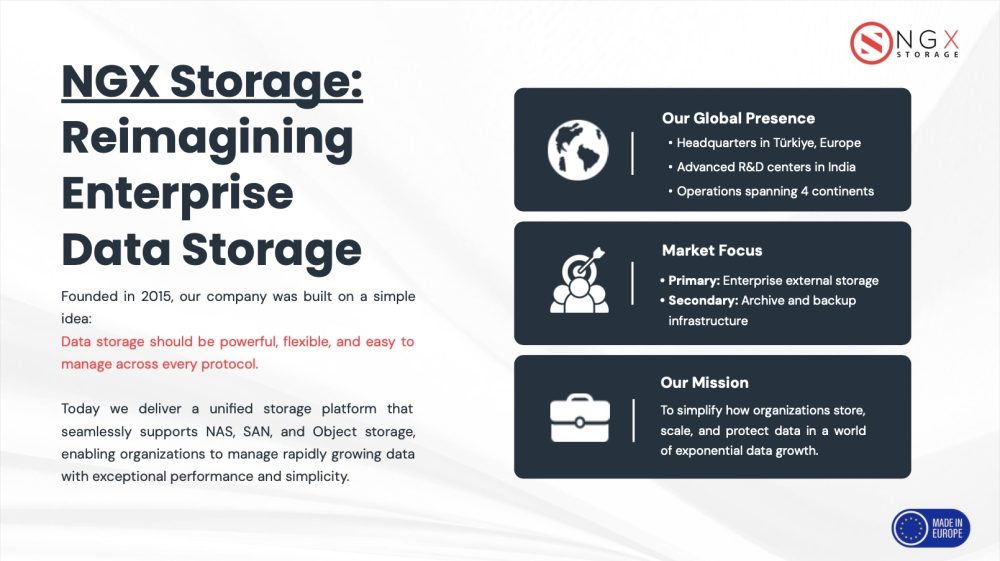

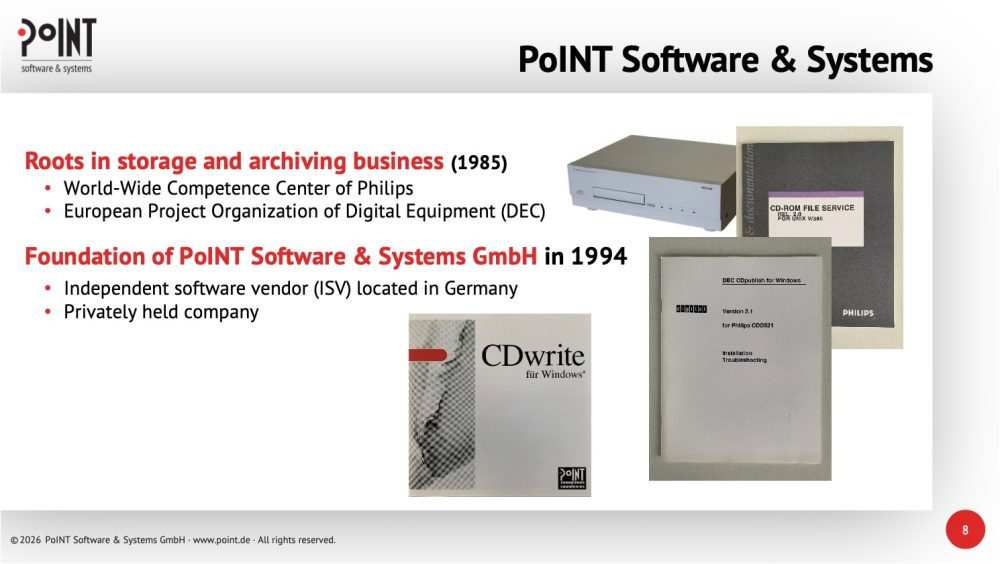

PoINT is a privately held German software vendor founded in 1994, with roots in storage and archiving dating back to 1985 through work with Philips and Digital Equipment Corporation. Certified as "Software Made in Europe" and recipient of the Storage Newsletter Cloud Storage Award 2026, the company's core mission centers on helping organizations manage data growth efficiently, reduce costs, and build cyber-resilient storage infrastructures.

The company frames its market relevance around five intersecting categories of pressure facing organizations today: explosive growth in unstructured data and migration complexity on the technical side; rising storage and energy prices on the economic side; data sovereignty concerns on the political side; compliance, archiving obligations, and cybercrime risk on the legal side; and CO2 footprints and e-waste on the ecological side. PoINT's response to all five centers on intelligent data tiering, placing the right data in the right place at the right time, with a strong emphasis on tape as a cost-efficient medium that consumes no energy when inactive and provides natural air-gapping against ransomware.

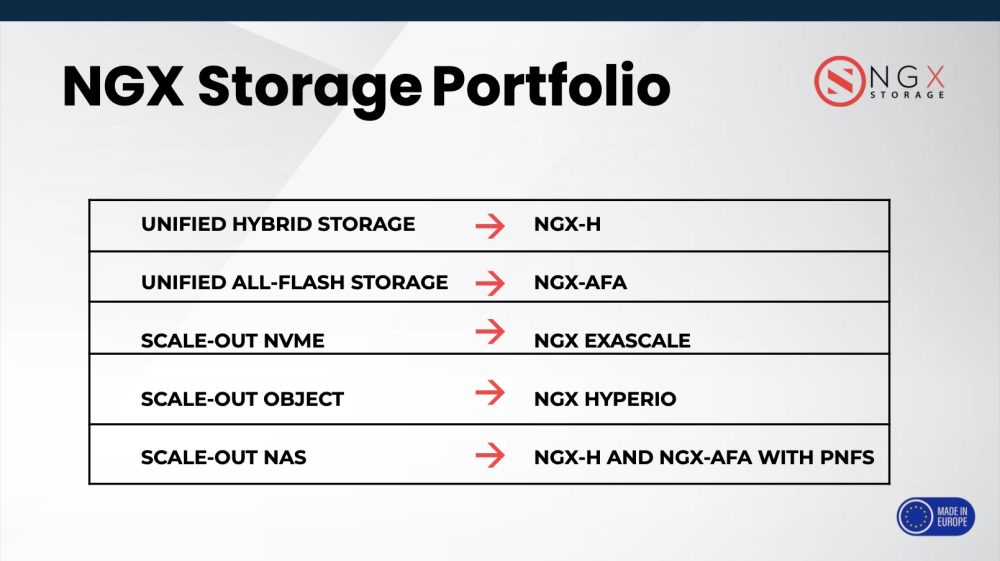

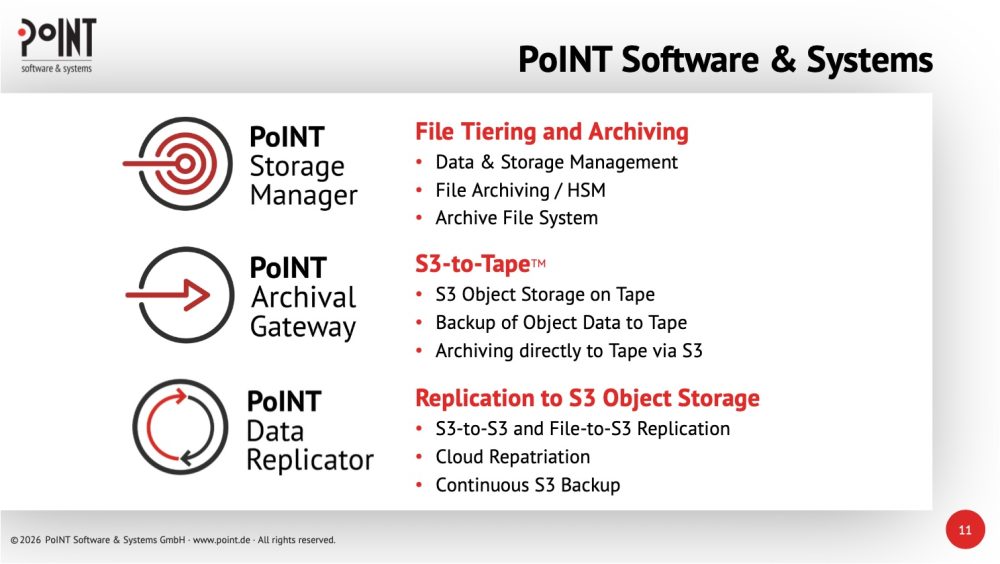

The company offers three main software products. The PoINT Storage Manager, launched in 2007, handles file tiering and archiving by moving inactive files from primary NAS systems to secondary storage including tape, optical, object stores, or public cloud, using policy-based rules while maintaining transparent access for end users. It counts over 200 installations worldwide, with a notable deployment at Daimler spanning multiple locations with WORM, versioning, encryption, and multi-tenancy. The PoINT Archival Gateway delivers S3-to-tape functionality, exposing an Amazon S3-compatible REST API while writing data directly to tape without intermediate disk layers, dramatically reducing costs compared to all-disk or public cloud approaches. Available in Compact and Enterprise editions, the Enterprise configuration scales to 32 interface nodes, 12 tape libraries, 384 drives, and 153.6 GB/s native throughput, with geo-distribution, automatic failover, and erasure coding across two sites. It is also packaged as the ORION S3, a turnkey system developed with BDT offering up to 392PB of native capacity. The PoINT Data Replicator handles backup and replication of object and file data to S3-compatible systems, supporting S3-to-S3 and File-to-S3 modes for use cases including cloud repatriation, legacy NAS migration, and continuous backup via Kafka and SQS change tracking.

Notable customers include Sixt, Daimler, Amgen, PostFinance, and EMBL-EBI, which deployed the gateway to archive Kubernetes workloads via S3 and achieve read/write throughput exceeding 1PB per week. Technology partners include HPE, NetApp, Fujitsu, Dell EMC, Cloudian, and Spectra, with resellers including SVA, Cristie, and Computacenter.